The AI You Dismissed Isn't the AI That's Coming

Anthropic CEO Dario Amodei recently said AI could automate large parts of software engineering within the next 6 to 12 months.

The reactions were predictable.

Some people mocked it. Some people dismissed it. Some people nodded quietly, because they’ve seen what’s already possible.

I’ve been watching this split for over a year now. In my circle of friends, family, and former colleagues, I keep seeing the same pattern. Someone tries AI, has a mediocre experience, and writes the whole thing off. “It’s overhyped.” “It can’t do real work.” “It’s just autocomplete.”

And here’s the thing: they’re not wrong about the AI they used.

They’re just not talking about the same AI.

The Gap Isn’t Belief. It’s Experience.

Six months ago, a friend told me AI coding tools were “useless for anything real.” I asked what he’d tried. It was a free-tier model from 2023.

Meanwhile, I was shipping code with Claude Opus 4.

We weren’t having a disagreement about AI. We were living in different realities.

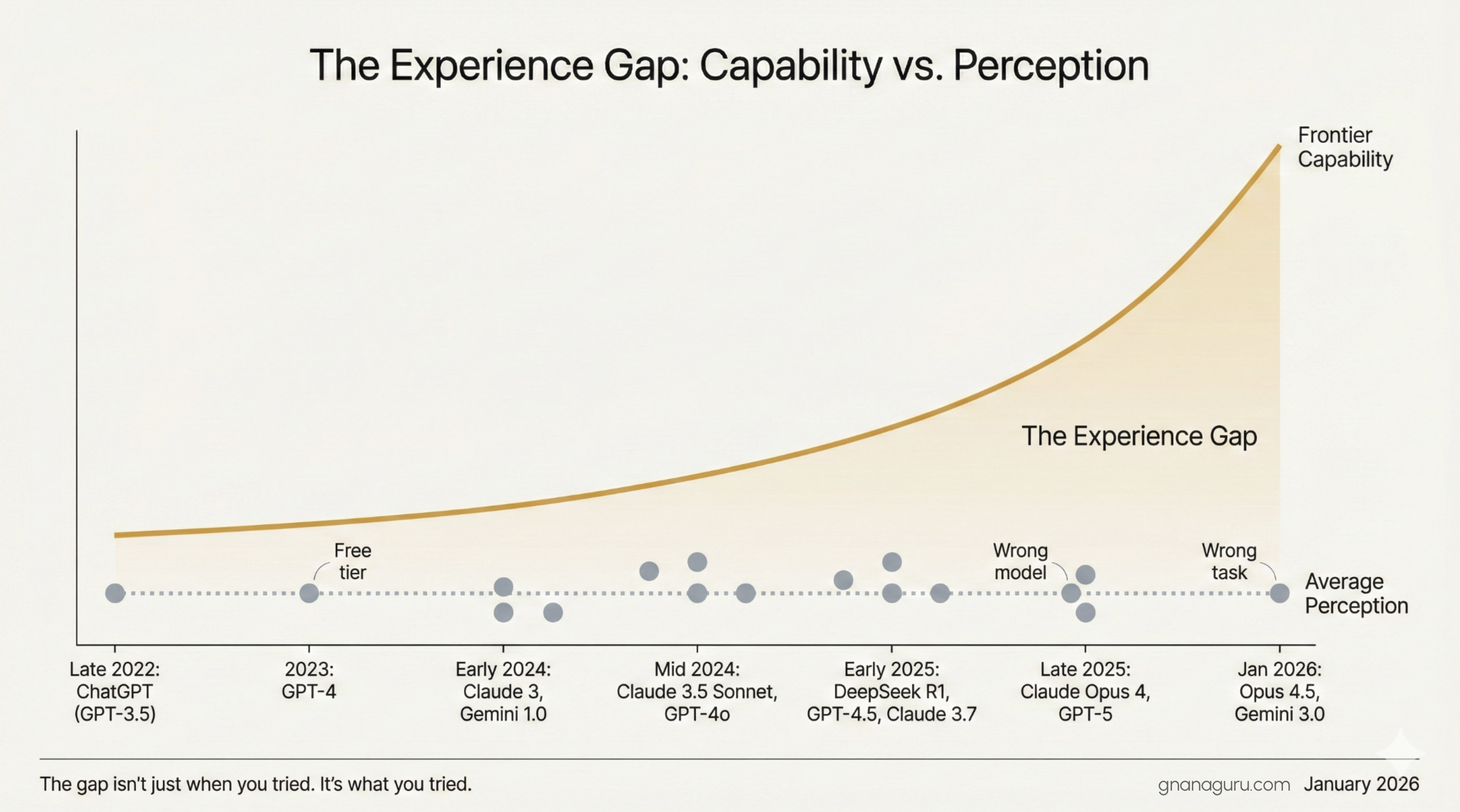

This keeps happening. Someone makes a confident claim, “AI can’t do X,” and when you dig in, they’re either using a free tier, using the wrong model for the task, or holding an opinion they formed months ago and never revisited. The technology moved. They didn’t.

And this isn’t just about people who tried AI in 2023. I see people trying AI today, in January 2026, and still forming outdated opinions because they used Haiku for complex reasoning. Or asked a general assistant to write production code. Or tried the free tier once and walked away.

The gap isn’t just about when you tried. It’s about what you tried.

I saw a comment recently that captured this perfectly. Tyler Jensen, responding to AI skepticism, wrote: “Six months ago, I would have dismissed this as ridiculous. Then Claude Opus 4.5.”

That’s it. That’s the whole pattern. The gap between dismissal and acceptance often isn’t about intelligence, or openness, or willingness to adapt. It’s about whether you’ve actually touched the frontier.

Why the Gap Exists

This isn’t a judgment. It’s just reality.

Cost. Frontier models aren’t free. A Claude Pro subscription, GPT-5 access, the latest reasoning models: these cost money. Not everyone can justify the expense, especially if they tried a free model once and it underwhelmed them.

Geography. Access isn’t equal everywhere. Payment methods, regional restrictions, and employer policies create real barriers depending on where you are.

Awareness. If you’re not actively tracking this space, you might not even know that the models from three months ago are already obsolete. The pace is disorienting. What was frontier in mid-2025 feels dated by January 2026.

Inertia. First impressions stick. If your first experience with AI coding assistance was frustrating, you’re not rushing back to try again. That’s human. But it also means your mental model is frozen in time.

Mismatch. This one’s subtle but common. Someone tries a general-purpose model for a specialized coding task. Or uses a fast, lightweight model for complex reasoning. Or asks an assistant optimized for conversation to architect a system. The tool wasn’t bad; it was the wrong tool for that job. Different models have different strengths. Opus 4.5 thinks differently than Sonnet. Sonnet thinks differently than Haiku. Using the wrong model for a task and concluding “AI doesn’t work” is like using a screwdriver as a hammer and blaming the tool.

Shifting landscape. Even if you matched the right model to the right task six months ago, that match might be wrong today. Model strengths shift with every major release. What GPT-4 was best at in mid-2025 isn’t necessarily what GPT-5 excels at now. OpenAI’s models and Google’s Gemini models keep trading places on different strengths. The leaderboard reshuffles quarterly. Keeping up isn’t optional; it’s the price of having a valid opinion.

None of these make someone stupid or stubborn. They just create a lag. And that lag is widening.

The Uncomfortable Part

Here’s what makes this hard to talk about.

The people dismissing AI aren’t delusional. They’re accurately describing their experience. The free-tier model was frustrating. The 2023 assistant did hallucinate constantly. The code it generated was mediocre.

But the conversation has moved on. The models have moved on. And if your opinion was formed on old technology, it’s not really an opinion about AI anymore. It’s an opinion about history.

That’s fine for casual dinner conversation. It’s a problem if you’re making career decisions, hiring decisions, or strategic bets based on it.

The gap isn’t between optimists and skeptics. It’s between people with current experience and people with outdated experience. And right now, a lot of confident opinions are being broadcast by people who haven’t touched a frontier model in the last 90 days.

What’s Actually Happening

Let me be clear about what I’m not saying.

I’m not saying everyone’s job disappears tomorrow. I’m not saying AI is magic. I’m not saying the skeptics are fools.

What I am saying: the trajectory is real, and it’s faster than most people’s intuitions suggest.

Inside companies that have adopted frontier models seriously, the workflow has already changed. Engineers are spending less time writing code from scratch and more time reviewing, guiding, and architecting. The work hasn’t disappeared; it’s shifted upward.

That’s not a threat. That’s an upgrade.

The people I know who’ve embraced this aren’t worried about being replaced. They’re too busy building things that would have taken them three times as long a year ago. They’ve moved from implementation to direction. From typing to thinking. From executing to designing.

That’s the future that’s available, if you’re paying attention.

The Opportunity in the Gap

Here’s the part that excites me.

If half the industry is operating on outdated assumptions, that’s an advantage for anyone who isn’t. While others debate whether AI “really works,” you can be shipping. While others wait for certainty, you can be learning.

The experience gap is temporary. Models will get cheaper. Access will expand. Eventually, everyone will catch up.

But right now? Right now, there’s a window. The people who close the gap first, who actually sit down with a frontier model and push it, will have a head start that compounds.

Not because they believed harder. Because they experienced sooner.

The Invitation

If you’re skeptical about AI, I’m not here to convince you.

I’m here to ask you a few questions:

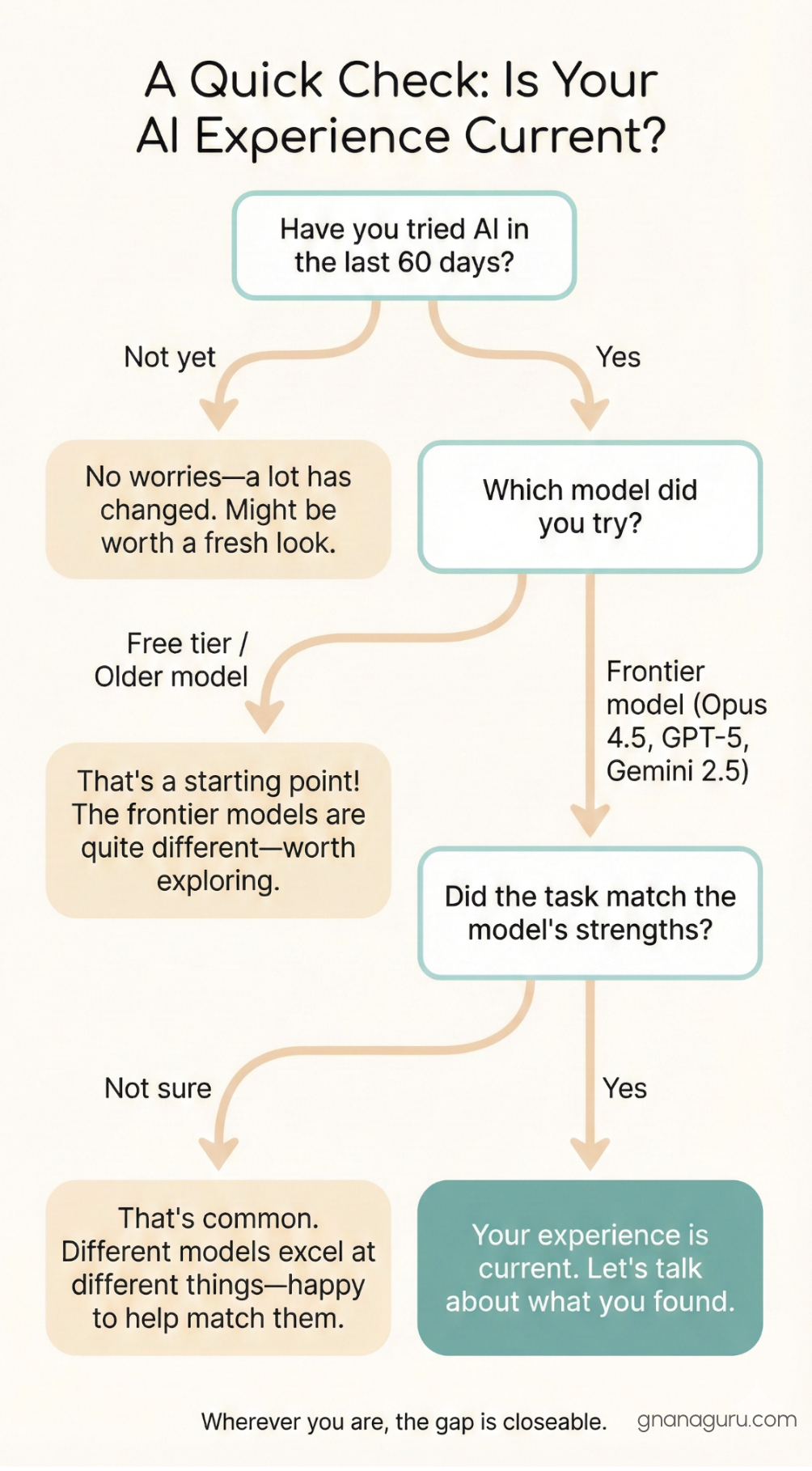

When was the last time you actually tried it? Not six months ago, but recently: within the last 60 days.

If you tried it recently, which model did you use? The free tier? A 2024 model? Or the actual frontier: Opus 4.5, GPT-5, or the latest Gemini model?

If you used a frontier model, what did you try to build? A quick demo, or something real? A task that plays to the model’s strengths, or something it was never designed for?

Did you use a reasoning model for a reasoning task? A coding model for code? Or did you ask a general assistant to do specialized work and conclude it “doesn’t get it”?

These questions matter. Because if the answer is “I tried ChatGPT free tier once in 2024 and it made stuff up,” then your skepticism isn’t about AI. It’s about something that no longer represents the frontier.

The gap is closeable. The future is more interesting than the past. And the best way to form an opinion is to have an experience worth forming one on.